Monitoring Cloudflare Logs with ClickStack

This guide shows you how to ingest Cloudflare logs into ClickStack using ClickPipes. You'll configure event-driven ingestion via SQS and set up ClickPipes to continuously ingest logs from S3 into ClickHouse.

A demo dataset is available if you want to test the integration before configuring a production pipeline.

Time Required: 15-20 minutes

Integration with existing Cloudflare Logpush

This section assumes you have Cloudflare Logpush configured to export logs to S3. If not, follow Cloudflare's AWS S3 setup guide first.

Prerequisites

- ClickStack instance running

- Cloudflare Logpush actively writing logs to an S3 bucket

- AWS permissions to create SQS queues and IAM roles

- S3 bucket name and region where Cloudflare writes logs

Create SQS queue

Create an SQS queue to receive notifications when Cloudflare uploads new log files to S3.

Via AWS Console:

- Navigate to SQS → Create queue

- Type: Standard

- Name: cloudflare-logs-queue

- Click Create queue

- Copy the Queue URL

Configure access policy:

Select your queue → Access policy tab → Edit → Replace with:
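A minimal sketch of such an access policy, assuming the common AWS pattern of letting the S3 service principal send messages to the queue only on behalf of your bucket and account. The helper name and the statement ID are illustrative; the script only prints JSON that you can paste into the Access policy editor.

```python
import json

# Placeholders from this guide; substitute your own values.
REGION = "REGION"
ACCOUNT_ID = "ACCOUNT_ID"
BUCKET_NAME = "YOUR-BUCKET-NAME"
QUEUE_NAME = "cloudflare-logs-queue"


def build_queue_access_policy(region, account_id, bucket, queue_name):
    """Return an SQS access policy that lets S3 deliver event notifications."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowS3ToSendMessages",  # illustrative statement ID
                "Effect": "Allow",
                "Principal": {"Service": "s3.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": f"arn:aws:sqs:{region}:{account_id}:{queue_name}",
                # Restrict senders to the one bucket in your own account.
                "Condition": {
                    "ArnLike": {"aws:SourceArn": f"arn:aws:s3:::{bucket}"},
                    "StringEquals": {"aws:SourceAccount": account_id},
                },
            }
        ],
    }


queue_policy = build_queue_access_policy(REGION, ACCOUNT_ID, BUCKET_NAME, QUEUE_NAME)
print(json.dumps(queue_policy, indent=2))
```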

Replace REGION, ACCOUNT_ID, and YOUR-BUCKET-NAME with your values.

Configure S3 event notifications

Configure your S3 bucket to notify the queue when new files arrive.

- S3 bucket → Properties → Event notifications → Create event notification

- Name: cloudflare-new-file

- Event types: ✓ All object create events

- Destination: SQS queue → Select cloudflare-logs-queue

- Click Save changes
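The console settings above correspond to an S3 notification configuration document. The sketch below builds that document with the names used in this guide, assuming you would rather apply it through the AWS CLI or an SDK than through the console. The helper function is illustrative, and the queue ARN uses the same REGION and ACCOUNT_ID placeholders as the access policy.

```python
import json


def build_notification_config(queue_arn, name="cloudflare-new-file"):
    """Return an S3 notification configuration that targets one SQS queue."""
    return {
        "QueueConfigurations": [
            {
                "Id": name,
                "QueueArn": queue_arn,
                # Equivalent to "All object create events" in the console.
                "Events": ["s3:ObjectCreated:*"],
            }
        ]
    }


notification_config = build_notification_config(
    "arn:aws:sqs:REGION:ACCOUNT_ID:cloudflare-logs-queue"
)
print(json.dumps(notification_config, indent=2))
```

Note that applying a notification configuration this way replaces the bucket's existing notification settings, so merge in any rules you already have.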

Create IAM role for ClickPipes

ClickPipes needs permission to read from S3 and consume SQS messages.

Get ClickHouse Cloud IAM ARN:

- ClickHouse Cloud Console → Settings → Network Security Information

- Copy the IAM Role ARN

Create IAM role:

- AWS Console → IAM → Roles → Create role

- Trusted entity: Custom trust policy

- Paste:

- Role name: clickhouse-clickpipes-cloudflare

- Click Create role
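The custom trust policy pasted in the steps above can be sketched as follows, assuming a standard cross-account trust relationship in which the ClickHouse Cloud IAM role is allowed to assume your role. The ARN shown is a placeholder, not a real value; use the one copied from the ClickHouse Cloud Console.

```python
import json

# Placeholder: replace with the IAM Role ARN copied from ClickHouse Cloud.
CLICKHOUSE_IAM_ARN = "arn:aws:iam::CLICKHOUSE_ACCOUNT_ID:role/CLICKHOUSE_ROLE_NAME"


def build_trust_policy(clickhouse_iam_arn):
    """Return a trust policy that lets the given principal assume this role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": clickhouse_iam_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


trust_policy = build_trust_policy(CLICKHOUSE_IAM_ARN)
print(json.dumps(trust_policy, indent=2))
```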

Add permissions:

- Select role → Add permissions → Create inline policy

- Paste:

- Policy name: ClickPipesCloudflareAccess

- Copy the Role ARN
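The inline policy needs to cover the two capabilities named at the start of this section: reading from S3 and consuming SQS messages. The sketch below is one plausible shape. The exact list of actions is an assumption, so check it against the current ClickPipes documentation before relying on it.

```python
import json


def build_permissions_policy(bucket, queue_arn):
    """Return an inline policy granting S3 read access and SQS consume access."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Read log objects and list the bucket (assumed action set).
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:ListBucket"],
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
            },
            {
                # Receive and acknowledge notifications (assumed action set).
                "Effect": "Allow",
                "Action": [
                    "sqs:ReceiveMessage",
                    "sqs:DeleteMessage",
                    "sqs:GetQueueAttributes",
                ],
                "Resource": queue_arn,
            },
        ],
    }


permissions_policy = build_permissions_policy(
    "YOUR-BUCKET-NAME",
    "arn:aws:sqs:REGION:ACCOUNT_ID:cloudflare-logs-queue",
)
print(json.dumps(permissions_policy, indent=2))
```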

Create ClickPipes job

- ClickHouse Cloud Console → Data Sources → Create ClickPipe

- Source: Amazon S3

Connection:

- Bucket: Your Cloudflare logs bucket

- Region: Your bucket region

- Authentication: IAM Role → Paste Role ARN from previous step

Ingestion:

- Mode: Continuous

- Ordering: Any order

- ✓ Enable SQS

- Queue URL: Paste the Queue URL copied in "Create SQS queue"

Schema:

ClickPipes auto-detects schema from your logs. Review and adjust field types as needed.

Example schema:
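As an illustration of the kind of schema to expect, the sketch below generates a ClickHouse table definition for a small subset of Cloudflare HTTP request fields. The table name, the column types, and the sort key are assumptions chosen for illustration; the schema that ClickPipes detects depends on the fields and timestamp format selected in your Logpush job.

```python
# Hypothetical table name; ClickPipes lets you choose your own.
TABLE_NAME = "cloudflare_http_logs"

# A subset of Cloudflare HTTP request fields with assumed ClickHouse types.
FIELD_TYPES = {
    "EdgeStartTimestamp": "DateTime64(3)",
    "ClientIP": "String",
    "ClientCountry": "LowCardinality(String)",
    "ClientRequestHost": "String",
    "ClientRequestMethod": "LowCardinality(String)",
    "ClientRequestURI": "String",
    "EdgeResponseStatus": "UInt16",
    "EdgeResponseBytes": "UInt64",
    "CacheCacheStatus": "LowCardinality(String)",
    "RayID": "String",
}


def build_create_table(table, fields, order_by):
    """Render a CREATE TABLE statement from a field-to-type mapping."""
    columns = ",\n".join(f"    `{name}` {ctype}" for name, ctype in fields.items())
    return (
        f"CREATE TABLE IF NOT EXISTS {table}\n(\n{columns}\n)\n"
        f"ENGINE = MergeTree\nORDER BY ({', '.join(order_by)})"
    )


ddl = build_create_table(TABLE_NAME, FIELD_TYPES, ["EdgeStartTimestamp"])
print(ddl)
```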

Click Create ClickPipe

Demo dataset

For users who want to test before configuring production, we provide sample Cloudflare logs.

Download sample dataset

The dataset includes 24 hours of HTTP requests with realistic patterns covering traffic spikes, security events, and geographic distribution.

Verify demo data

Dashboards and visualization

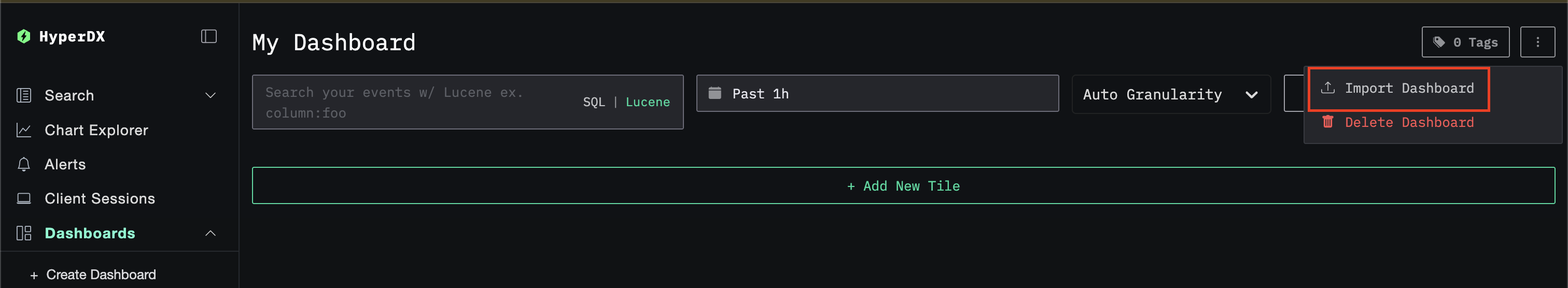

Import dashboard

- HyperDX → Dashboards → Import Dashboard

- Upload cloudflare-logs-dashboard.json → Finish Import

View dashboard

The dashboard includes:

- Request rate and traffic volume

- Geographic distribution

- Cache hit rates

- Error rates by status code

- Security events

Troubleshooting

No files appearing in S3

Verify Cloudflare Logpush is active:

- Cloudflare Dashboard → Analytics & Logs → Logs → Check job status

Generate test traffic:
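One way to do this is to send a burst of requests to a hostname that is proxied through Cloudflare. The sketch below uses only the Python standard library. The URL in the usage comment is a placeholder, and the `fetch` parameter is an illustrative hook that allows a dry run without network access.

```python
import urllib.error
import urllib.request


def generate_test_traffic(url, count=100, fetch=None):
    """Send `count` GET requests to `url` and return the observed status codes.

    A failed request is recorded as None, or as the HTTP error code when the
    server answered with one.
    """
    if fetch is None:
        def fetch(target):
            with urllib.request.urlopen(target, timeout=10) as response:
                return response.status

    statuses = []
    for _ in range(count):
        try:
            statuses.append(fetch(url))
        except urllib.error.HTTPError as err:
            # 4xx/5xx responses still produce log entries at the edge.
            statuses.append(err.code)
        except OSError:
            # DNS failures, refused connections, and timeouts.
            statuses.append(None)
    return statuses


# Usage (placeholder hostname; replace with a zone proxied by Cloudflare):
# generate_test_traffic("https://your-domain.example/", count=100)
```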

Wait 2-3 minutes and check S3.

SQS not receiving messages

Verify S3 event notification:

- S3 bucket → Properties → Event notifications → Confirm configuration exists

Test SQS policy:
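A quick offline check is to copy the policy JSON from the queue's Access policy tab and confirm that it contains an Allow statement for the S3 service principal with the `sqs:SendMessage` action. The helper below is an illustrative sketch, not an AWS tool. It inspects only the principal and action and does not evaluate `Condition` blocks, so a passing result does not prove that the bucket ARN in the policy is correct.

```python
import json


def _as_list(value):
    """Normalize a policy field that may be a single value or a list."""
    return value if isinstance(value, list) else [value]


def policy_allows_s3_send(policy_json):
    """Return True if any Allow statement lets S3 call sqs:SendMessage."""
    policy = json.loads(policy_json)
    for statement in _as_list(policy.get("Statement", [])):
        if statement.get("Effect") != "Allow":
            continue
        principal = statement.get("Principal", {})
        if principal == "*":
            services = ["s3.amazonaws.com"]
        else:
            services = _as_list(principal.get("Service", []))
        # IAM action names are case-insensitive.
        actions = [a.lower() for a in _as_list(statement.get("Action", []))]
        if "s3.amazonaws.com" in services and any(
            a in ("sqs:sendmessage", "sqs:*", "*") for a in actions
        ):
            return True
    return False


example_policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": {"Service": "s3.amazonaws.com"},
        "Action": "sqs:SendMessage",
        "Resource": "arn:aws:sqs:REGION:ACCOUNT_ID:cloudflare-logs-queue",
    }],
})
print(policy_allows_s3_send(example_policy))
```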

ClickPipes not processing files

Check IAM permissions:

- Verify ClickHouse can assume the role

- Confirm S3 and SQS permissions are correct

View ClickPipes logs:

- ClickHouse Cloud Console → Data Sources → Your ClickPipe → Logs

Data not appearing in ClickHouse

Verify table exists:

Check for schema errors:

Next steps

- Set up alerts for security events

- Optimize retention policies based on data volume

- Create custom dashboards for specific use cases

Going to production

For production deployments:

- Enable daily subfolders in Cloudflare Logpush for better organization

- Configure SQS Dead Letter Queue for failed message handling

- Set up CloudWatch alarms for queue depth monitoring

- Review partitioning strategy based on query patterns
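For the Dead Letter Queue item above, SQS expects a `RedrivePolicy` queue attribute whose value is a JSON string. The sketch below builds that attribute. The dead-letter queue name `cloudflare-logs-dlq` and the receive count of 5 are assumptions chosen for illustration.

```python
import json


def build_redrive_attributes(dlq_arn, max_receive_count=5):
    """Return SQS queue attributes that route repeatedly failed messages to a DLQ."""
    return {
        "RedrivePolicy": json.dumps(
            {
                "deadLetterTargetArn": dlq_arn,
                # Number of receives before a message moves to the DLQ.
                "maxReceiveCount": str(max_receive_count),
            }
        )
    }


redrive_attributes = build_redrive_attributes(
    "arn:aws:sqs:REGION:ACCOUNT_ID:cloudflare-logs-dlq"  # hypothetical DLQ
)
print(json.dumps(redrive_attributes, indent=2))
```

The resulting mapping can be set as attributes on cloudflare-logs-queue through the console, the AWS CLI, or an SDK.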